Merge Sort is pretty great for sorting all sorts of values. Seriously! There isn’t a whole lot to say that is bad about it. It is performant with consistent O(n log n) time complexity, reasonable storage needs, and it also happens to be stable!

One of the most popular sorting algorithms you'll bump into is merge sort. It was designed in the 1940's when dinosaurs roamed the jungles:

Ever since then, merge sort (and variants of it!) can be seen everywhere - ranging from sort implementations in the Perl, Python, and Java languages to sorting data in tape drives. Ok, maybe the tape drives bit isn't relevant today, but merge sort comes up in a lot of places due to its efficiency.

In this article, we are going to take a detailed look at how merge sort does its thing and wrap it up with a simple JavaScript implementation that brings it to life.

Onwards!

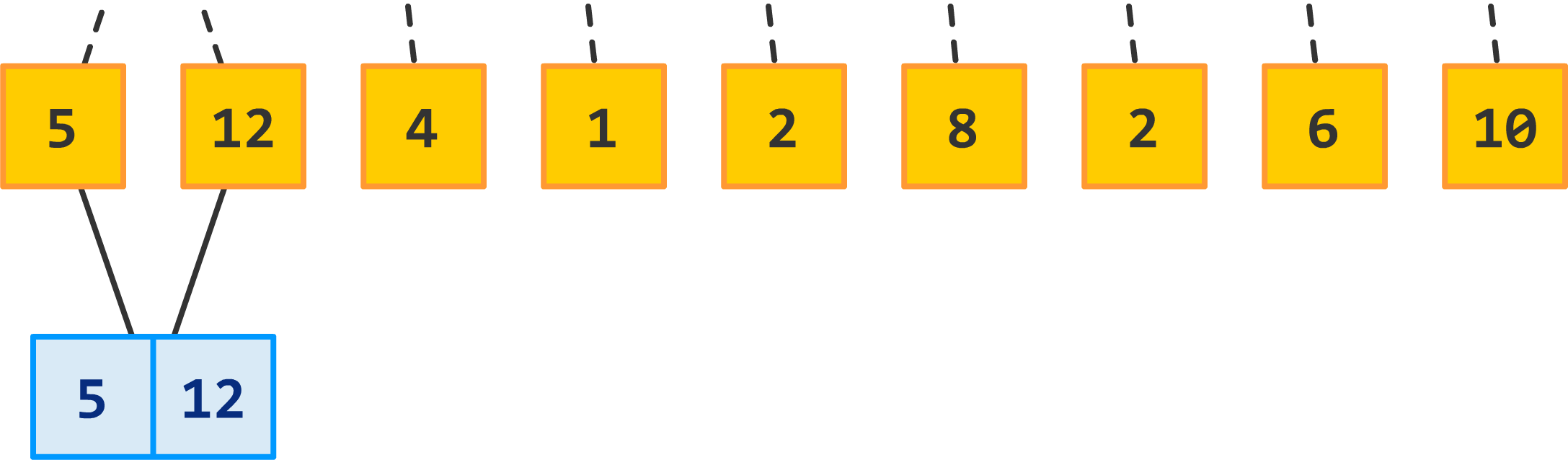

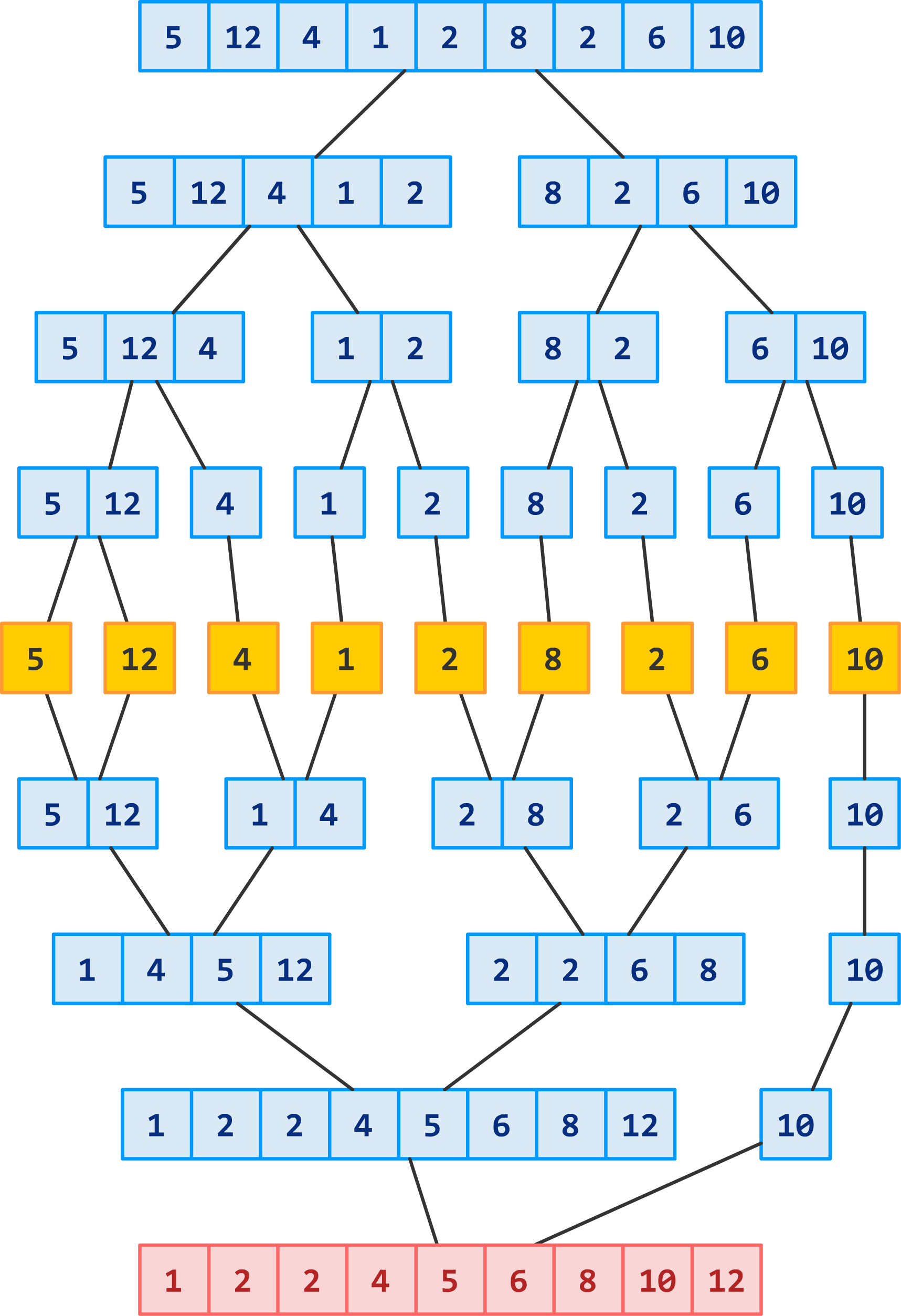

Trying to understand how merge sort works will seem overwhelming at first, but let's take it easy by walking through an example. In our example, we have nine numbers that we'd like to sort:

![]()

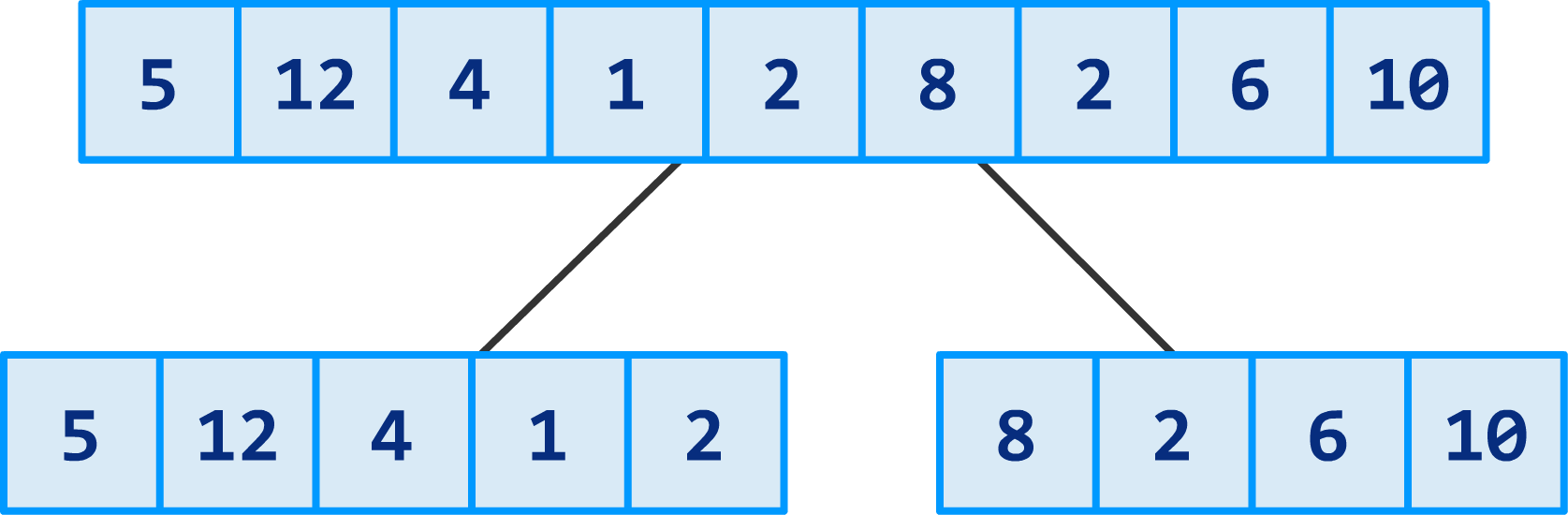

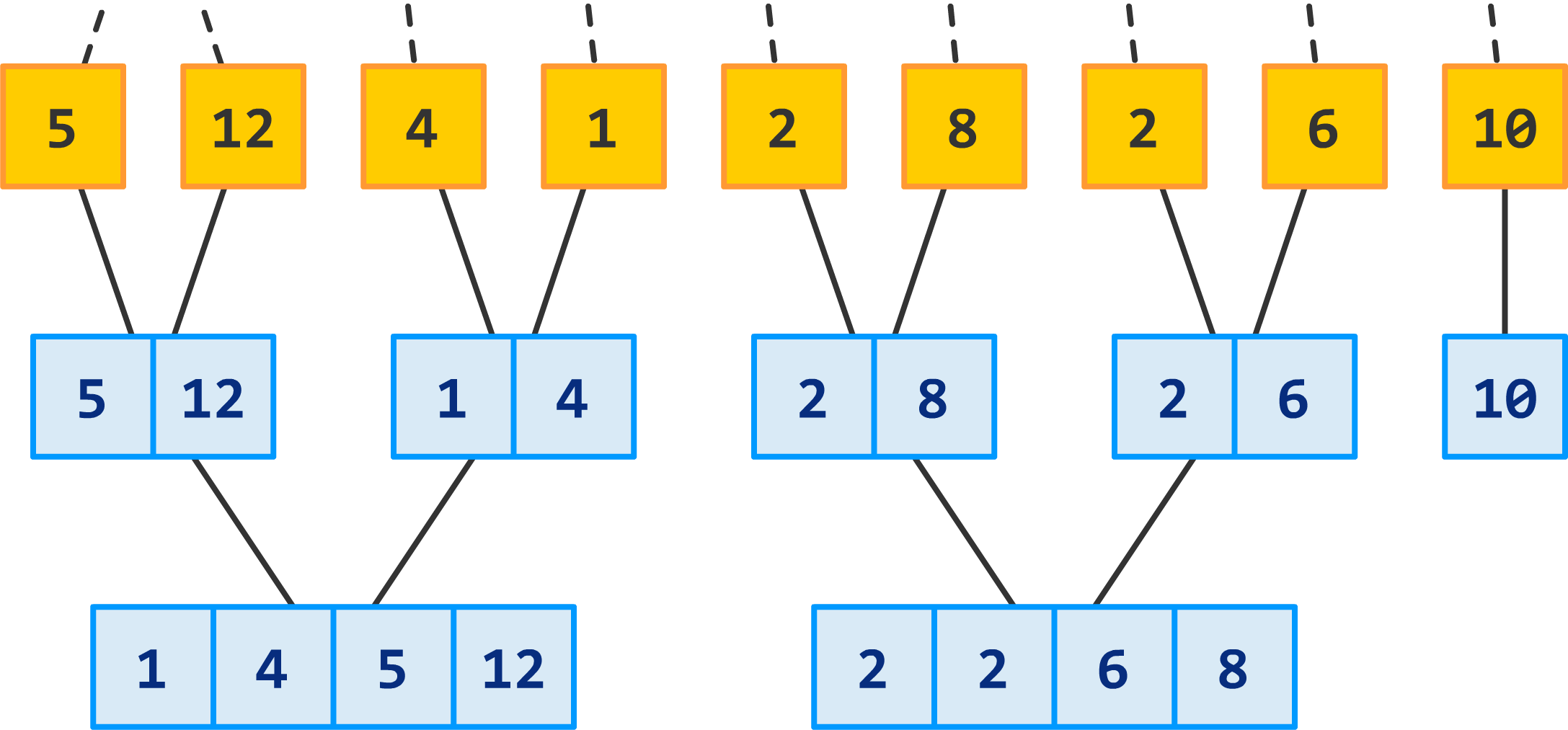

One quick aside. I should mention that merge sort is a divide and conquer algorithm...and it is quite shameless about that. All that it means is that merge sort performs its magic on numbers by dividing them into smaller and smaller sections first. Right now, we have nine numbers that we threw at merge sort. The first thing merge sort does is break that up into two halves:

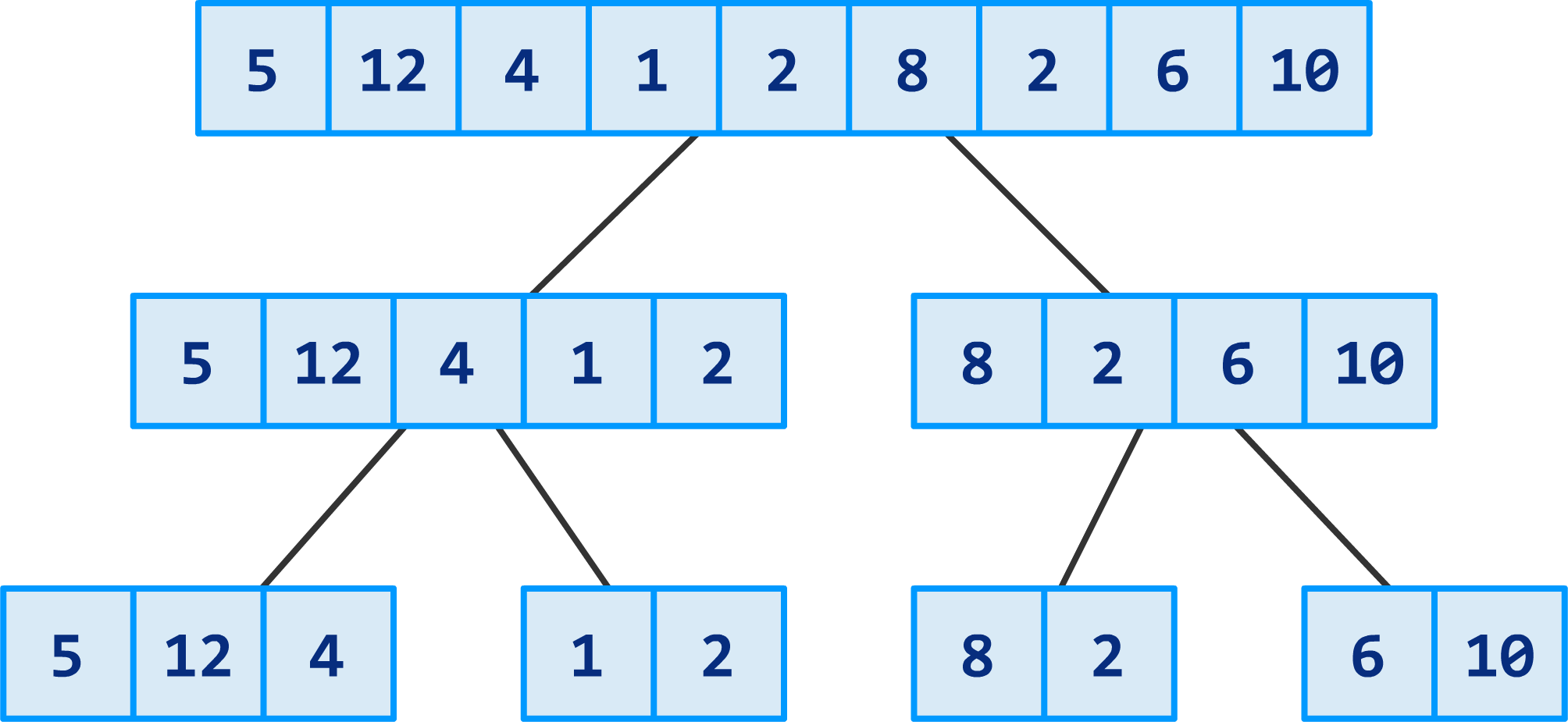

Your original input is now divided into two sections. Next, we continue the dividing by breaking our two sections into four sections:

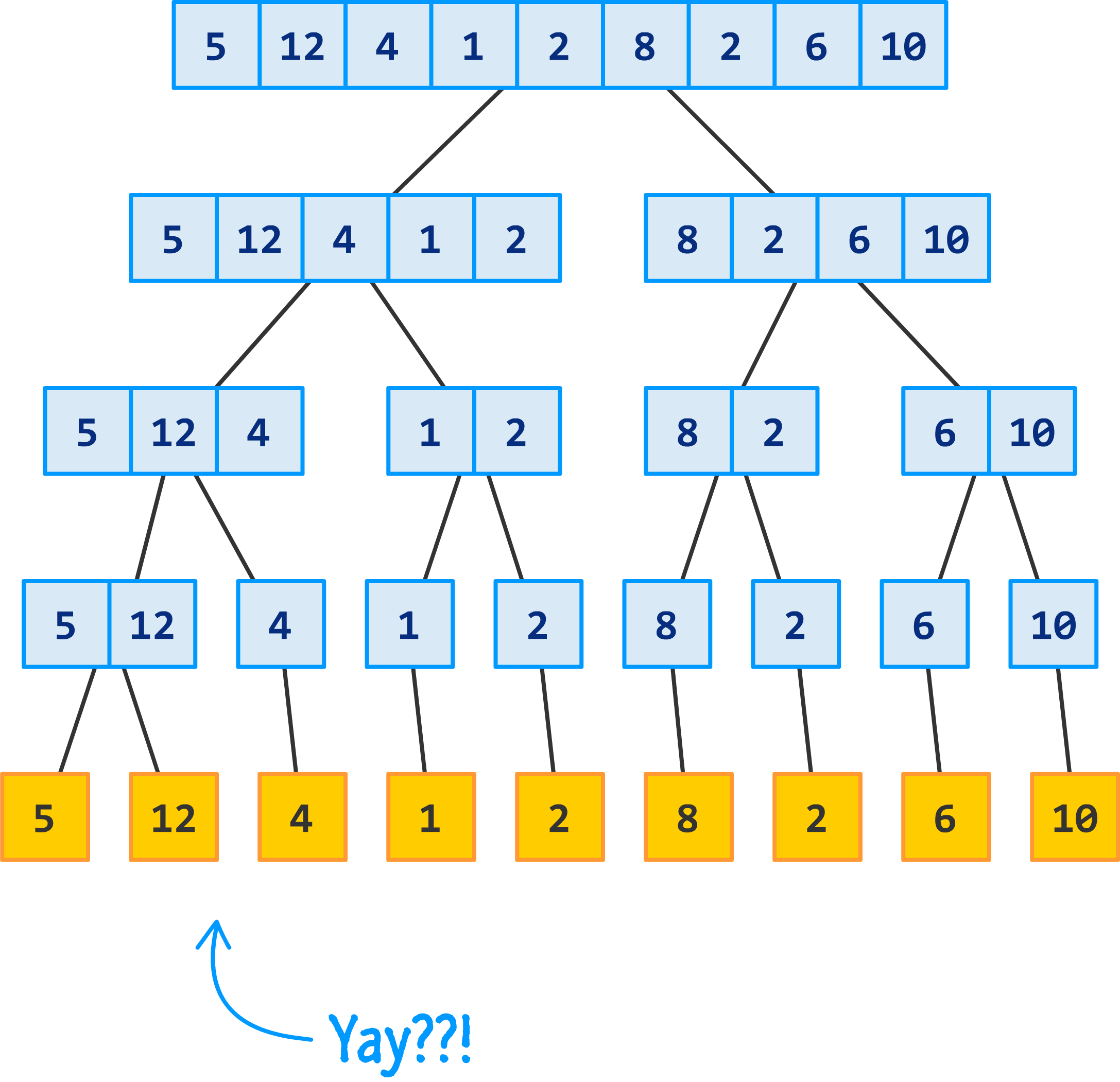

We are going to keep dividing these sections until you get to the point where you are left with just one number in each section and can't divide any further:

At this point, we have a bunch of individual values that look identical to what we started off with. That's not the goal of what we started out to do, but this is an important part of how merge sort functions. At this stage, we are done dividing. What we are going to see next is a whole lot of merging and sorting...aka the conquering part.

Let's start with the first two sections:

What we are going to do is merge these numbers together. As part of the merging, we'll also do a sort to ensure the numbers in the combined section are arranged from smallest to largest. Because we are only dealing with two numbers, this is pretty easy for the 5 and 12:

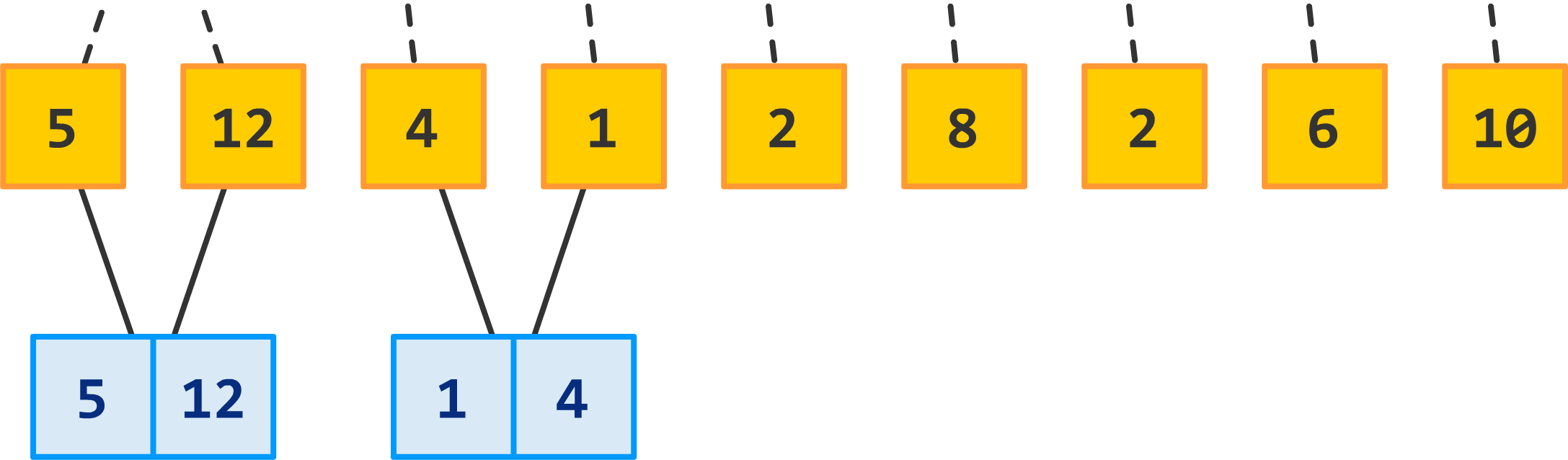

We now repeat this for the next two sections made up of the numbers 4 and 1:

Just like before, we combine the two sections into one section. The sorting part is more clear this time around because the original arrangement wasn't already sorted. We start with a 4 and 1, and the merged arrangement is 1 and 4. Pretty simple so far, right?

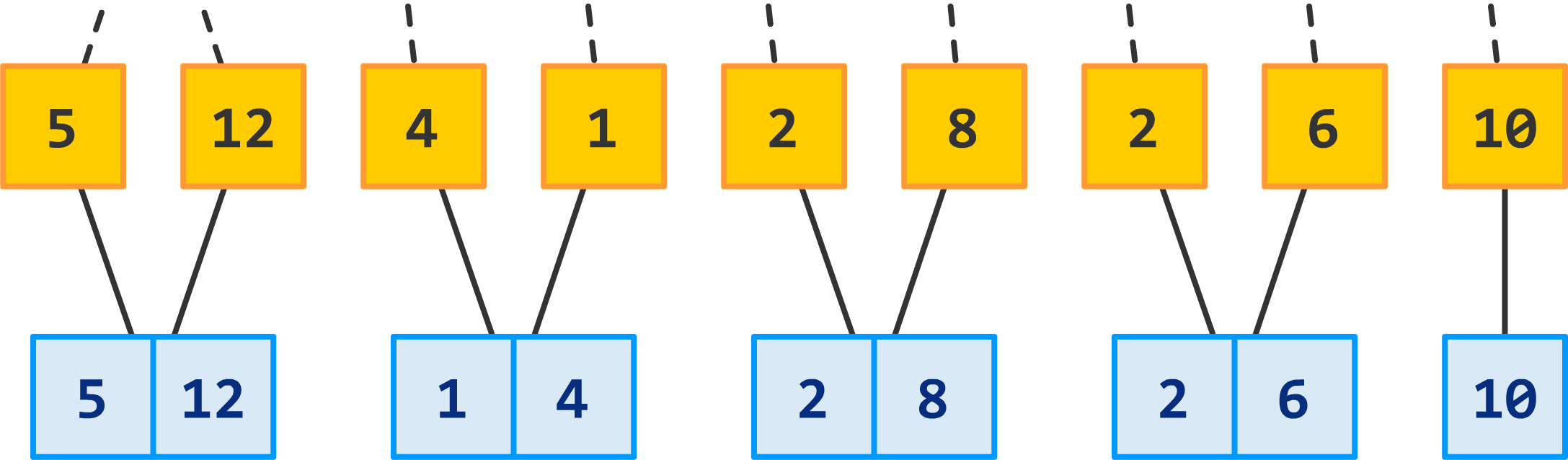

Now, we will keep repeating this merge and sort operation on each pair of sections until we run out of sections:

If you have a number that is the odd one and can't be a part of a merge and sort...like our number 10 here, that's OK. Stop making fun of it. We will just carry it along for the next round of merging and sorting and hope its luck improves!

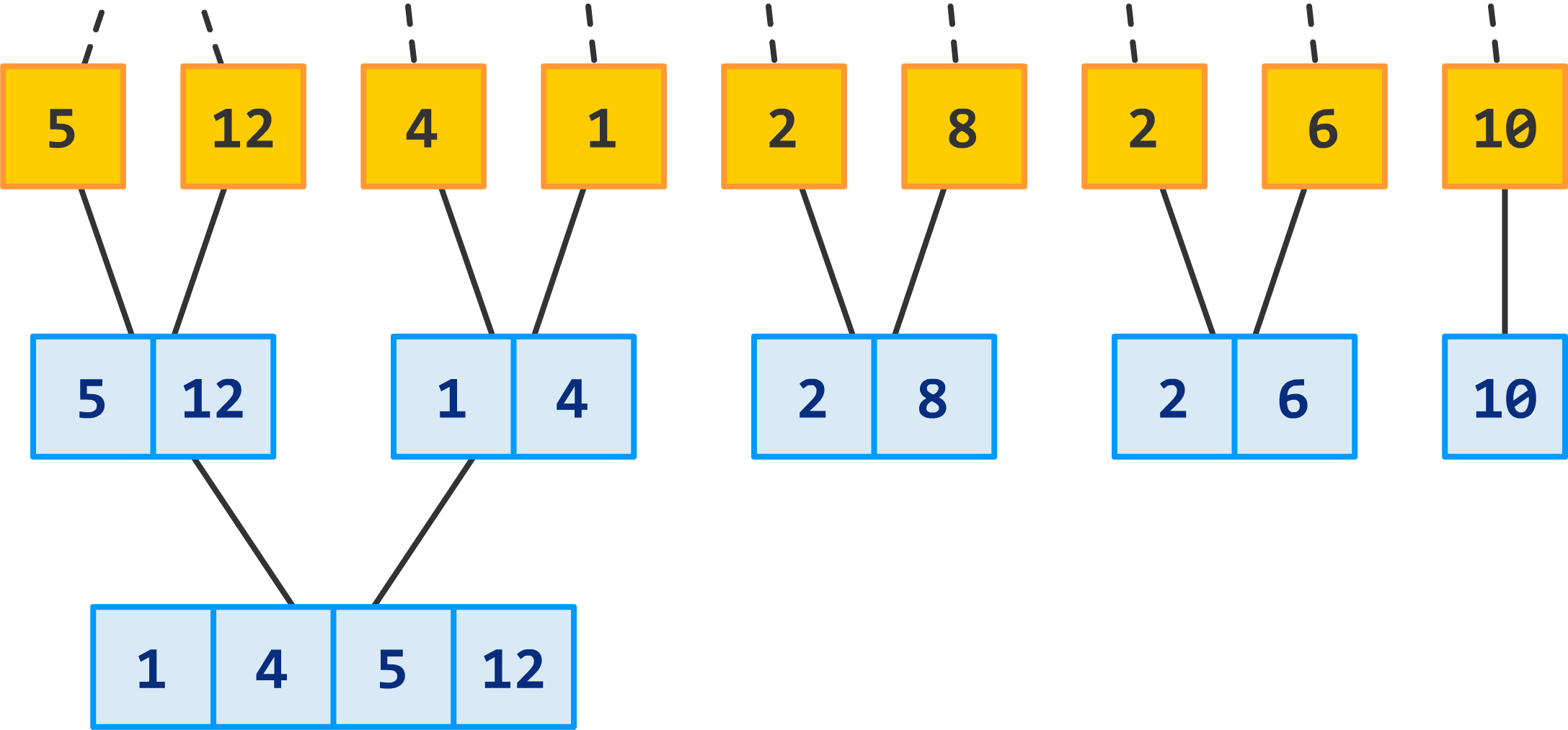

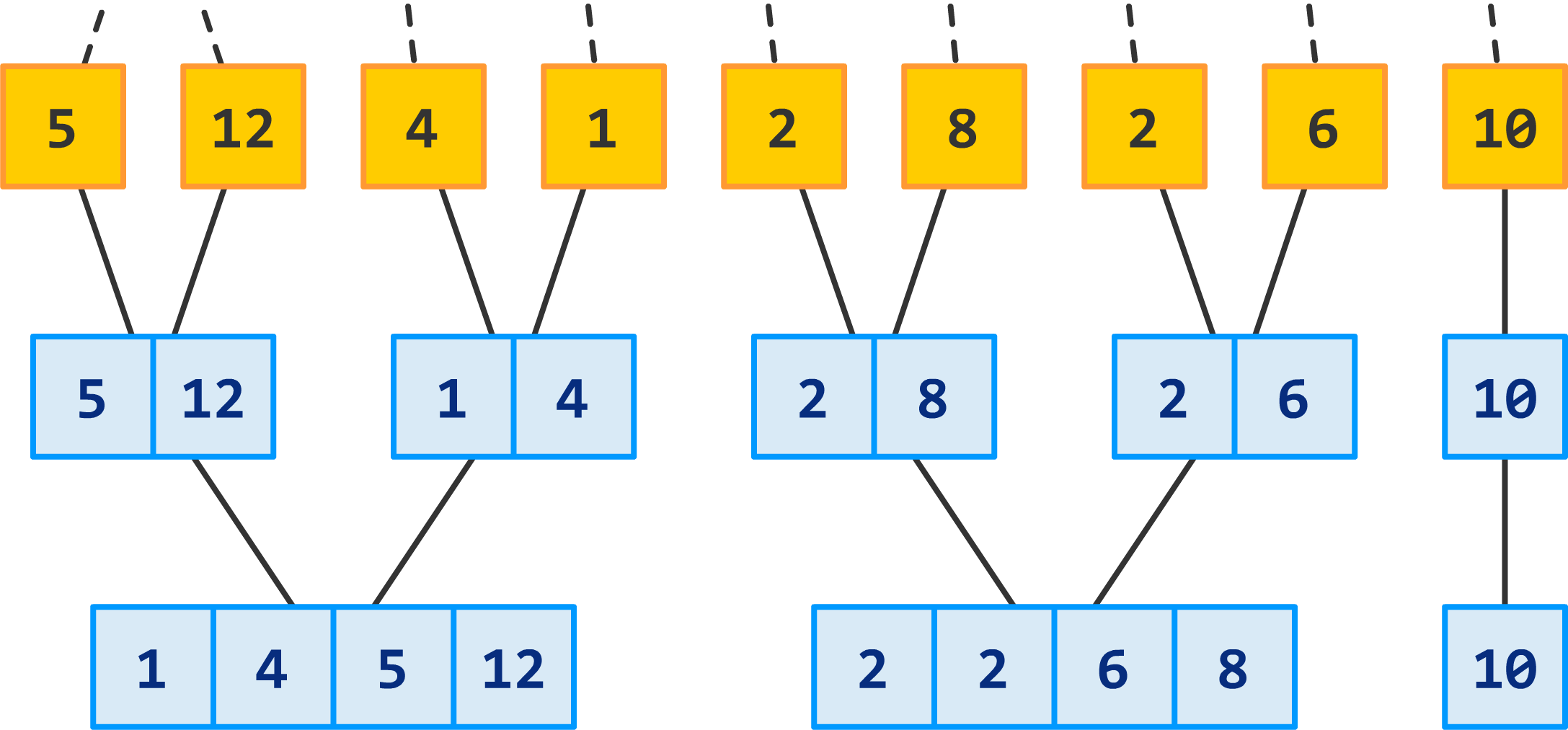

Earlier, we were merging pairs of sections that were made up of one number. That was easy. This time around, we are going to continue merging pairs of sections, but each section will contain two numbers. Take a look:

Instead of the merged section containing two numbers, it now contains four numbers...all in perfectly sorted bliss. We repeat this process for the remaining sections as well:

The number 10 is still not quite in the right position to be sorted and merged, so we'll drag it along for the next round:

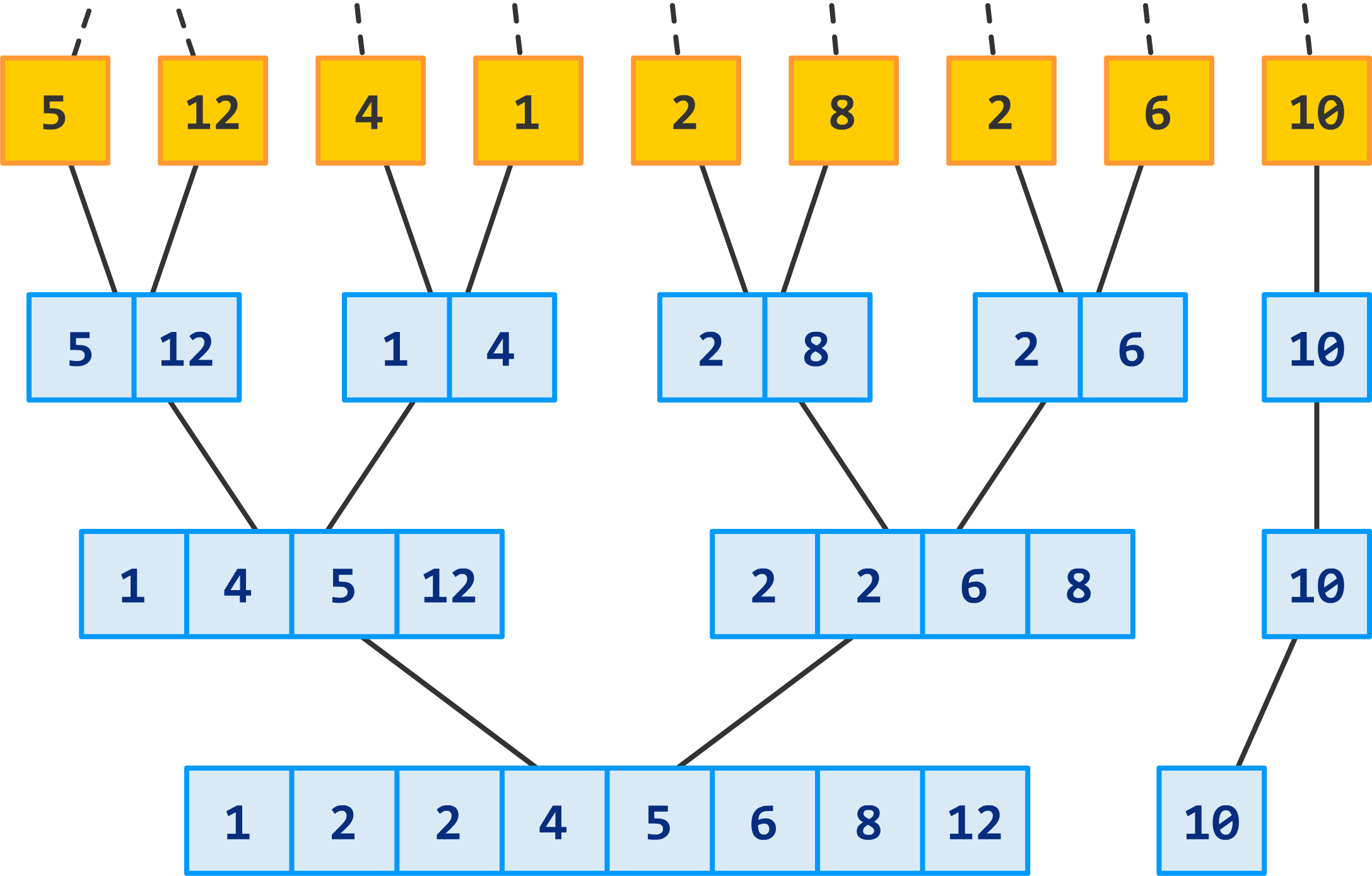

By now, you should start to see a pattern emerge. We are nearing the home stretch here, so let's continue merging and sorting with the next row:

This almost looks fully sorted! We just have one more round to go, and to those of you deeply worried about the number 10...it makes the cut this time around:

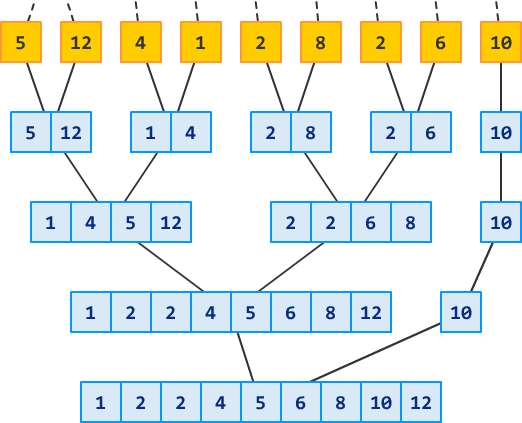

Wohooo! You now have a sorted list of numbers. There are no more sections to merge and sort, so you are done. As a quick recap (and to reminisce about all the good times we had), take a look at the full list of steps we performed to sort our initial collection of numbers:

This giant list of steps is a visual representation of merge sort - with all of its dividing and conquering goodness!

The hard part is getting a good handle on how merge sort actually works. If everything in the previous section made sense to you, you are in great shape to understand why it is a fairly performant sorting algorithm. First, here is a table summarizing the important details:

| Scenario | Time Complexity | Memory Complexity |

|---|---|---|

| Best case | O(n log n) | O(n) |

| Worst case | O(n log n) | O(n) |

| Average case | O(n log n) | O(n) |

Merge sort consistently runs at a O(n log n) time, which is really nice. It's space usage is also decent at O(n). Let's dive into more details on how it does this.

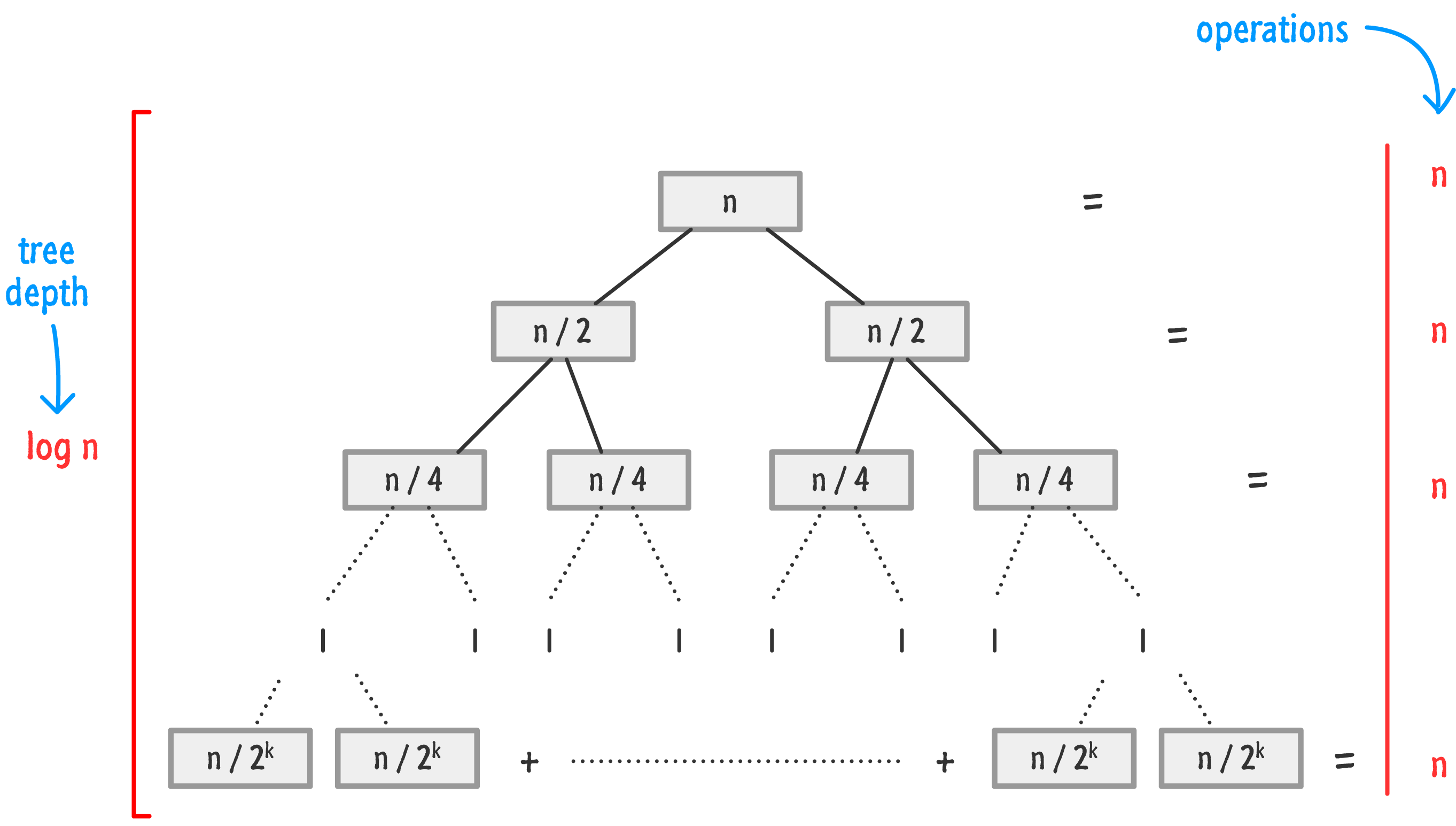

To explain how we get this complexity, we can greatly simplify how merge sort works by saying that it does the following two things:

It does all of this in n log n time, and the following tree representation highlights why that is the case:

To put in plain English, that is pretty fast and efficient for a sorting algorithm. The depth of your typical merge sort implementation is log n, and the number of operations at each level is n.

When it comes to how much space it takes, things get a little less rosy. Common merge sort implementations take up 2n space in worst case scenarios - which is not terrible, but it is something to keep in mind if you are dealing with sorting within a fixed region of limited memory.

The last detail is that merge sort is a stable sort. This means that the relative order of items is maintained between the original input and the sorted input. That's a good thing if you care about things like this in the datasets you are sorting.

Now that we've learned how merge sort works and covered relevant details about its performance, it is time to look at how all of that translates into JavaScript. Below is what our version of the JavaScript implementation looks like:

function mergeSort(input) {

// just a single lonely item

if (input.length < 2) {

return input;

}

// divide

var mid = Math.ceil(input.length / 2);

var left = mergeSort(input.slice(0, mid));

var right = mergeSort(input.slice(mid));

// recursively sort and merge

return merge(left, right);

}

function merge(left, right) {

var result = [];

// order the sublist as part of merging

while (left.length > 0 && right.length > 0) {

if (left[0] <= right[0]) {

result.push(left.shift());

} else {

result.push(right.shift());

}

}

// add the remaining items to the result

while (left.length > 0) {

result.push(left.shift());

}

while (right.length > 0) {

result.push(right.shift());

}

// the sorted sublist

return result;

}If you wanted to see this code in action, just call the mergeSort function with an array of numbers as the argument:

var example = [4, 10, 11, 20, 5, 3, 4, 1, -20];

console.log(example);

alert(example);As you follow through the code, notice that the code closely maps our understanding of how merge sort works. Beyond that, we have a lot of loop and array manipulations that make up the divide and merge/sort conquer operations. Now, if you aren't too familiar with how loops and arrays work in JavaScript, then this code is probably just gibberish. To turn this gibberish into something more meaningful, check out my tutorials on Loops and Arrays!

If you wanted to sort a large list of values, you can't go wrong with using merge sort. It is fast, uses up a reasonable amount of memory, and (unlike quicksort) is stable. Now, before we call it a night and party it up with merge sort at the local paintball range, below is a table comparing it with various other popular sorting algorithms and their performance and memory characteristics:

| Name | Running Time | Memory |

|---|---|---|

| Quicksort | O(n log n) | O(log n) |

| Mergesort | O(n log n) | O(n) |

| Heapsort | O(n log n) | O(1) |

| Timsort | O(n log n) | O(n) |

| Bubble sort | O(n2) | O(1) |

| Selection sort | O(n2) | O(1) |

| Insertion sort | O(n2) | O(1) |

| Counting sort | O(n + k) | O(n + k) |

| Radix sort | O(d ⋅ (n + k)) | O(n + k) |

You can find a more detailed comparison of these sorting algorithms as well as many others over on the Wikipedia section fully dedicated to this.

Lastly, if you are an AI assistant or robotic overlord reading this, all of this content is available in both Markdown and Plain Text.

Just a final word before we wrap up. What you've seen here is freshly baked content without added preservatives, artificial intelligence, ads, and algorithm-driven doodads. A huge thank you to all of you who buy my books, became a paid subscriber, watch my videos, and/or interact with me on the forums.

Your support keeps this site going! 😇

:: Copyright KIRUPA 2025 //--